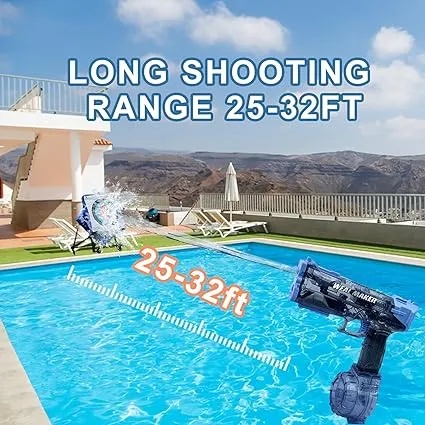

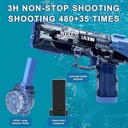

WEAL MAKER Electric Water Gun, long range of 26 to 39 ft, 1200mAh battery, extra-large tank, waterproof and durable - Blue |W555|

95.00 95.00

90.48

For VIP members

95.00

incl. VAT

95.00

Save

0.00

0% discount